Public Identifiers, UUIDs and a Tiny SEO Fix

A recent question from my friend and colleague Mohammad got me thinking about the way we identify data in web applications.

While working on the DBIC component of a REST API, he came across the term enumeration attack. In this type of attack, an attacker systematically guesses resource identifiers in order to access data they shouldn’t be able to see.

For example, if your API exposes URLs like this:

GET /users/123
GET /users/124
GET /users/125

then it’s easy for someone to try a large range of identifiers and see what they get back.

Mohammad’s question was simple:

Should we replace sequential IDs with UUIDs? And if we do, should we index the UUID column?

As is often the case, the answer turned out to be “it depends”.

Two Different Types of Data

The first thing I realised is that not all data objects have the same requirements.

Some objects are naturally public.

For example, books on a publishing website are intended to be discovered. In fact, you probably want people to be able to guess their URLs:

/books/design-patterns-in-modern-perl

In this case, a human-readable slug makes perfect sense.

Other objects are private by nature. User accounts, orders, invoices and API resources generally shouldn’t be enumerable. In those cases, a UUID is often a better choice:

/users/550e8400-e29b-41d4-a716-446655440000

The important observation is that slugs and UUIDs solve different problems.

- Slugs are for humans (and, perhaps, search engines).

- UUIDs are for machines.

Database Design

A common question is whether a UUID should replace the primary key.

In most cases, I don’t think it should.

My preferred design is:

CREATE TABLE users (
    id   BIGINT PRIMARY KEY,
    uuid UUID NOT NULL UNIQUE
);

The integer primary key remains the internal identifier used for joins and foreign keys.

The UUID becomes the public identifier exposed through APIs.

This gives you the best of both worlds:

- Small, efficient foreign keys.

- Fast joins.

- Unguessable public identifiers.

If the application regularly searches by UUID then the UUID column should be indexed. In practice, declaring it UNIQUE will usually create the appropriate index automatically.

The Hybrid Approach

Thinking about this reminded me that many large sites use a hybrid approach.

Amazon product URLs contain both a human-readable title and a stable identifier:

/Design-Patterns-Modern-Perl/dp/B0XXXXX123

The ASIN is what really identifies the product.

The title is there for humans.

Stack Overflow does something similar:

/questions/12345/how-do-i-index-a-uuid-column

Again, the question ID is authoritative. The title is helpful context.

My Line of Succession website uses the same idea.

A person page looks like this:

/p/2b5998-the-prince-william-prince-of-wales

The important part is the identifier:

2b5998

The rest is descriptive text.

This turns out to be particularly useful for royalty because titles change constantly. Someone might be “Prince William”, then “The Prince of Wales”, and eventually “King William V”.

By separating identity from presentation, old links continue to work regardless of title changes.
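Here is a minimal sketch of how that separation might look in code. The helper name parse_person_path is hypothetical, and I'm assuming the identifier is the leading run of lowercase hex digits (as in 2b5998); the real routing code may well use different rules.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';

# Hypothetical helper: split a person path into its identifier and the
# free-form descriptive text that follows it.
# Assumption: the identifier is the leading run of lowercase hex digits.
sub parse_person_path ($path) {
    return unless $path =~ m{\A/p/([0-9a-f]+)(?:-(.*))?\z};
    return { id => $1, text => $2 // '' };
}

# All three example URLs resolve to the same identifier.
for my $path (qw(
    /p/2b5998-the-prince-william-prince-of-wales
    /p/2b5998-prince-billy
    /p/2b5998-fred
)) {
    my $parsed = parse_person_path($path);
    print "$path -> $parsed->{id}\n";
}
```

Only the id is used for the lookup; the text after it is carried along purely for display.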

A Tiny Bug

While thinking about all of this, I discovered a small bug in Line of Succession.

The site allows any descriptive text after the identifier. These URLs all resolve to the same person:

/p/2b5998-the-prince-william-prince-of-wales
/p/2b5998-prince-billy
/p/2b5998-fred

The application correctly ignores the descriptive text and uses only the identifier.

However, there was a problem.

The page was generating its canonical URL from the incoming request path rather than from the person record.

That meant a request for:

/p/2b5998-prince-billy

generated:

<link rel="canonical" href="https://lineofsuccession.co.uk/p/2b5998-prince-billy">

which is obviously not the canonical URL.

The fix was surprisingly small:

  sub canonical( $self ) {
    if ($self->request->is_date_page) {
      return '/' . $self->canonical_date;
+   } elsif ($self->request->is_person_page) {
+     return '/p/' . $self->request->person->slug;
    } else {
      return $self->request->path;
    }
  }

At the same time I simplified another method by making it reuse the canonical URL logic.

The result was a six-line patch that fixed the SEO issue and made the code slightly cleaner.

Those are my favourite kinds of fixes.

Future Improvements

The fix also revealed an emerging abstraction in the code.

At the moment, various parts of the application know how to construct URLs for different object types.

A cleaner approach would be to give objects responsibility for generating their own URLs.

I’m considering a HasURL role that would require an object to provide an identifier and optionally a prefix, and then build the URL automatically.
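To make the idea concrete, here is a rough sketch of what such a role might look like. It is not the site's code: a real version would probably use Role::Tiny or Moo::Role, whereas this one fakes the role with plain inheritance so it runs on core Perl alone. The method names url_identifier and url_prefix, and the Person class, are invented for the example.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';

# Sketch only: plain inheritance stands in for a proper role here.
package HasURL {
    use Carp qw(croak);

    # Consumers must provide url_identifier; url_prefix is optional.
    sub url ($self) {
        croak ref($self) . ' must implement url_identifier'
            unless $self->can('url_identifier');
        my $prefix = $self->can('url_prefix') ? $self->url_prefix : '';
        # Drop an empty prefix so we never emit a double slash.
        return join '/', '', grep { length } $prefix, $self->url_identifier;
    }
}

# Hypothetical consumer: a person whose slug is its public identifier.
package Person {
    our @ISA = ('HasURL');
    sub new ($class, %args)    { return bless {%args}, $class }
    sub url_prefix ($self)     { return 'p' }
    sub url_identifier ($self) { return $self->{slug} }
}

my $person = Person->new(slug => '2b5998-the-prince-william-prince-of-wales');
print $person->url, "\n";
```

With something like this in place, the canonical method could simply ask the object for its URL instead of knowing how each type of page is laid out.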

That’s a job for another day.

For now, a small question about UUIDs led to a useful discussion about public identifiers, a review of URL design, and a tiny production fix. Not bad for an afternoon’s work.

The post Public Identifiers, UUIDs and a Tiny SEO Fix first appeared on Perl Hacks.

Teaching AI About the British Monarchy with MCP

One of the more interesting additions I’ve made recently to the Line of Succession website is support for the Model Context Protocol (MCP).

If you’ve spent any time around AI tooling recently, you’ve probably seen people talking about MCP. It’s often described as “USB for AI”, which is perhaps a little overblown, but the basic idea is sound. MCP provides a standard way for AI assistants to discover and use external tools and data sources.

In practical terms, it means that instead of building bespoke integrations for ChatGPT, Claude, Gemini and whatever comes next, you expose a standard MCP endpoint and let the AI clients do the rest.

For a data-driven site like Line of Succession, that seemed like an obvious experiment.

What is MCP?

The Model Context Protocol was originally developed by Anthropic and has rapidly become one of the emerging standards in the AI ecosystem.

An MCP server exposes:

- Information about itself

- A list of available tools

- Schemas describing how those tools should be called

- The results returned by those tools

An AI client can connect to the server, discover the available tools and invoke them when needed.

Instead of scraping web pages or attempting to infer information from HTML, the AI gets access to structured data.

That’s exactly the kind of thing Line of Succession is good at.

Why Add MCP?

The site already exposes information through a traditional web interface and a JSON API.

But those interfaces were designed for humans and developers respectively.

MCP gives AI systems a much cleaner integration point.

For example, an AI assistant can now answer questions like:

- Who was the British sovereign on 14 November 1948?

- What did the line of succession look like in 1980?

- Who was next in line when Queen Victoria died?

without having to scrape pages or understand the site’s internal URLs.

More importantly, it ensures that the information comes directly from the same database that powers the website.

The AI isn’t guessing.

It’s querying the source of truth.

As someone who runs a reference website, that’s a pretty attractive proposition.

The Initial Design

My first goal was to keep things simple.

Rather than exposing dozens of narrowly-focused tools, I started with just two:

- sovereign_on_date

- line_of_succession

Those two tools cover a surprisingly large proportion of the questions people are likely to ask.

The first returns the sovereign reigning on a given date. The second returns the line of succession for a specified date, with a configurable limit on the number of entries returned.

The implementation currently caps the list at thirty people. That’s enough for most use cases while preventing someone from accidentally asking for all six thousand people currently in the line of succession.
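A cap like that can be a very small piece of code. The sketch below is illustrative rather than the site's actual implementation: the name clamp_limit is made up, and falling back to the cap when no usable limit is supplied is my assumption.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';

# Hypothetical helper: keep a client-supplied limit within sensible bounds.
# The cap of 30 comes from the text above. Missing, non-numeric or negative
# values are treated as "no limit given" and fall back to the cap.
sub clamp_limit ($requested, $cap = 30) {
    return $cap
        unless defined($requested) && $requested =~ /\A[0-9]+\z/;
    return 1    if $requested < 1;
    return $cap if $requested > $cap;
    return $requested;
}

print clamp_limit(5), "\n";      # within range, returned unchanged
print clamp_limit(6000), "\n";   # clamped to the cap
```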

One thing I learned quite quickly is that MCP isn’t really about exposing huge amounts of data. It’s about exposing useful questions that can be answered from your data.

MCP Is Mostly JSON-RPC

One thing that surprised me when I first started reading the specification was how little protocol code is actually required.

At its core, MCP uses JSON-RPC.

A client sends requests like:

{
  "jsonrpc": "2.0",
  "id": 1,
  "method": "tools/list"
}

and the server responds with:

{
  "jsonrpc": "2.0",
  "id": 1,
  "result": {
    ...
  }
}

Once I’d written helper methods for creating standard JSON-RPC responses, most of the complexity disappeared.

The MCP module contains methods like:

sub rpc_result ($self, $id, $result)

and:

sub rpc_error ($self, $id, $code, $message)

which means the Dancer route handlers remain pleasantly small.

The protocol logic lives in one place and the web application simply delegates to it.
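For illustration, helpers along those lines could be as simple as the following. Only the two method signatures come from the real module; the bodies and the Sketch::RPC package name are my own minimal reconstruction from the JSON-RPC 2.0 message shapes.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';
use JSON::PP ();

# Hypothetical stand-in for the relevant part of the MCP module.
package Sketch::RPC {
    sub new ($class) { return bless {}, $class }

    # Successful JSON-RPC 2.0 response: echoes the request id.
    sub rpc_result ($self, $id, $result) {
        return { jsonrpc => '2.0', id => $id, result => $result };
    }

    # JSON-RPC 2.0 error response: carries "error" instead of "result".
    sub rpc_error ($self, $id, $code, $message) {
        return {
            jsonrpc => '2.0',
            id      => $id,
            error   => { code => $code, message => $message },
        };
    }
}

my $rpc  = Sketch::RPC->new;
my $json = JSON::PP->new->canonical;

print $json->encode($rpc->rpc_result(1, { tools => [] })), "\n";
# -32601 is the JSON-RPC 2.0 code for "Method not found".
print $json->encode($rpc->rpc_error(2, -32601, 'Method not found')), "\n";
```

Returning plain data structures rather than encoded strings leaves serialisation to the route handler, which is one way of keeping the helpers easy to test.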

Separating the MCP Logic

I didn’t want protocol-specific code scattered throughout the web application.

Instead, I created a dedicated module:

package Succession::MCP;

This module is responsible for:

- Initialisation

- Tool discovery

- Tool execution

- JSON-RPC response generation

- Error handling

That keeps the Dancer routes thin and makes the MCP implementation easier to test independently.

It also means that if I ever decide to expose the same MCP server through a different transport mechanism, most of the work is already done.

Tool Calls Are Mostly Adapters

One pleasant surprise was how little new application logic I actually had to write.

The MCP server needs to expose tools, but those tools ultimately just answer questions about the succession database. The code to answer those questions already existed.

For example, the application’s model layer already contained methods such as:

- sovereign_on_date()

- line_of_succession()

These methods power parts of the website itself, so they already encapsulate all of the business rules and database queries.

The MCP implementation simply acts as an adapter.

When a tool call arrives, the server extracts the arguments, validates them and passes them to the existing model methods:

sub _call_tool ($self, $tool_name, $args) {
    my $tool = $self->_tool_dispatch->{$tool_name};
    return $tool->($args);
}

The tool implementations themselves are deliberately thin:

sub sovereign_on_date ($self, $args) {
    my $date = $args->{date};
    my $sovereign = $self->model->sovereign_on_date($date);
    ...
}

That’s exactly how I wanted it to work.

The MCP layer doesn’t know how to calculate a line of succession or determine who was sovereign on a particular date. It simply knows how to expose those capabilities through the protocol.

This is one of the advantages of adding MCP to an existing application. If your business logic is already cleanly separated from your web interface, an MCP server often becomes surprisingly straightforward to implement.

In many ways, adding MCP feels less like building a new application and more like adding another interface alongside the website and API.
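The adapter pattern described in this section can be sketched as a self-contained script. Everything here is a stand-in: the %model hash replaces the real model layer and returns canned text, and the validation rules and error messages are assumptions rather than the site's actual behaviour.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';

# Stand-in for the real model layer (hypothetical; returns canned text).
my %model = (
    sovereign_on_date => sub ($date) { return "sovereign on $date" },
);

# Dispatch table: tool name => code reference taking the argument hash.
my %tool_dispatch = (
    sovereign_on_date => sub ($args) {
        my $date = $args->{date} // '';
        # Validate the shape of the argument before touching the model.
        die "date must be YYYY-MM-DD\n"
            unless $date =~ /\A[0-9]{4}-[0-9]{2}-[0-9]{2}\z/;
        return $model{sovereign_on_date}->($date);
    },
);

# Look up the tool, refusing unknown names instead of calling undef.
sub call_tool ($tool_name, $args) {
    my $tool = $tool_dispatch{$tool_name}
        or die "Unknown tool: $tool_name\n";
    return $tool->($args);
}

print call_tool('sovereign_on_date', { date => '1948-11-14' }), "\n";

# Failures surface as exceptions the caller can turn into JSON-RPC errors.
my $ok = eval { call_tool('no_such_tool', {}); 1 };
print "error: $@" unless $ok;
```

The adapter holds no succession logic of its own: it checks the arguments, delegates, and reports failures.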

The YAML Epiphany

The most interesting design decision came a little later.

Initially, the tool definitions lived in Perl data structures.

That worked, but it quickly became obvious that I was duplicating information.

The MCP server needed tool descriptions.

The documentation page needed tool descriptions.

The schemas needed to be defined somewhere.

And every change required updating multiple places.

The obvious answer was to move all of the tool definitions into a YAML file.

The MCP module now loads its tool definitions at startup:

sub _build__tools ($self) { return LoadFile($self->tools_file); }

The result is a single source of truth.

The same YAML file drives:

- The tools/list response

- Tool metadata

- JSON schemas

- Human-readable documentation

Adding a new tool now involves updating one file and writing the code that implements it.

Everything else follows automatically.

Here’s the current YAML file:

# data/mcp-tools.yml
- name: sovereign_on_date
  description: Return the British sovereign on a given date.
  documentation: |
    Looks up the reigning British sovereign for the supplied date.
    Use this when answering questions such as “Who was sovereign on
    6 February 1952?”
  inputSchema:
    type: object
    properties:
      date:
        type: string
        description: Date in YYYY-MM-DD format.
    required:
      - date

- name: line_of_succession
  description: Return the line of succession on a given date.
  documentation: |
    Returns people in the line of succession.
    If no date is supplied, the current line of succession is returned.
  inputSchema:
    type: object
    properties:
      date:
        type: string
        description: Optional date in YYYY-MM-DD format. Omit for the current line of succession.
      limit:
        type: integer
        description: Maximum number of successors to return.
        minimum: 1
        maximum: 100
    required: []

Looking back, this is probably the part of the design I’m happiest with. It feels very Perl-ish: keep configuration as data and avoid duplicating information wherever possible.
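As a rough illustration of how one set of definitions can feed the tools/list response, here is a sketch that works on the data structure LoadFile would return. To keep it free of non-core dependencies the structure is written inline and abbreviated to one tool. The function name tools_list_result is hypothetical, and dropping the documentation field from the protocol response is my assumption about how the two views are split.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';
use JSON::PP ();

# What loading the YAML would give us, abbreviated to a single tool.
my $tools = [
    {
        name          => 'sovereign_on_date',
        description   => 'Return the British sovereign on a given date.',
        documentation => "Looks up the reigning British sovereign for the supplied date.\n",
        inputSchema   => {
            type       => 'object',
            properties => {
                date => {
                    type        => 'string',
                    description => 'Date in YYYY-MM-DD format.',
                },
            },
            required => ['date'],
        },
    },
];

# Build the "result" member of a tools/list response. The longer
# "documentation" text is left out and kept for the human-readable page
# (an assumption, not something the protocol requires).
sub tools_list_result ($tools) {
    return {
        tools => [
            map {
                +{
                    name        => $_->{name},
                    description => $_->{description},
                    inputSchema => $_->{inputSchema},
                }
            } @$tools
        ],
    };
}

print JSON::PP->new->canonical->pretty->encode(tools_list_result($tools));
```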

Human Documentation Matters

One thing I noticed while exploring other MCP servers is that many of them are effectively invisible to humans.

You know an endpoint exists.

You know it speaks MCP.

But unless you inspect the protocol responses manually, you don’t really know what it does.

I decided to add a conventional web page at /mcp.

The page lists all available tools, their descriptions and their schemas.

The nice part is that there is no duplicated documentation.

The page is generated from the same YAML definitions used by the MCP server itself.

If I add a new tool tomorrow, both the machine-readable and human-readable views update automatically.

Structured Data and Text Responses

Another nice feature of MCP is that tool results can include both structured data and human-readable text.

For example, a tool response might contain:

{
  "content": [
    {
      "type": "text",
      "text": "The sovereign on 14 November 1948 was George VI."
    }
  ],
  "structuredContent": {
    ...
  }
}

The structured content is useful for software.

The text is useful for humans and language models.

Both are generated from the same underlying data.

That gives AI clients flexibility while ensuring consistency.
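A small sketch shows how both views can come from the same values. The function name sovereign_result and the field names inside structuredContent are illustrative only; the real server's structured fields aren't shown here.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';
use JSON::PP ();

# Build a tool result carrying the same facts twice: once as a sentence
# and once as data. Field names in structuredContent are hypothetical.
sub sovereign_result ($date, $name) {
    return {
        content => [
            { type => 'text', text => "The sovereign on $date was $name." },
        ],
        structuredContent => { date => $date, sovereign => $name },
    };
}

my $result = sovereign_result('14 November 1948', 'George VI');
print JSON::PP->new->canonical->pretty->encode($result);
```

Because the sentence is interpolated from the same two values that populate the structured part, the two can't drift apart.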

Getting Listed

Once everything was working, I submitted the server to the MCP directory at mcpservers.org.

That might seem like a small step, but discoverability is important.

An MCP server hidden on a random website isn’t much use if nobody knows it exists.

Directories like that are rapidly becoming the equivalent of API catalogues for the AI era.

Being listed means developers and AI enthusiasts can find the service without first discovering the website.

Was It Worth It?

Absolutely.

The amount of code required was surprisingly small. Most of the work wasn’t implementing the protocol; it was deciding how best to expose the data.

More importantly, it opens the site up to an entirely new audience: AI agents.

Historically, websites were built for humans and APIs were built for developers.

MCP introduces a third category: services designed specifically for AI systems.

For a structured-data site like Line of Succession, that’s a natural fit.

Will MCP still be the dominant standard in five years’ time? I have no idea. The AI industry changes too quickly to make confident predictions.

But right now it has significant momentum, broad industry support and a growing ecosystem of tools.

And if nothing else, it’s rather satisfying to ask an AI who was on the throne on a particular date and know that the answer came directly from my database rather than from whatever the model happened to remember.

The post Teaching AI About the British Monarchy with MCP first appeared on Perl Hacks.

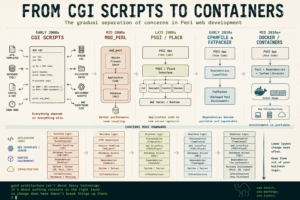

One of the defining characteristics of a good programmer is an instinct for keeping implementation details in the correct layer of an application.

That sounds abstract, but it turns out to explain a huge amount of the progress we’ve made in software development over the last twenty-five years.

And nowhere is that clearer than in Perl web development.

Many of us who built web applications during the dotcom boom spent years learning this lesson the hard way.

We wrote CGI programs that:

- parsed HTTP requests

- generated HTML by hand

- connected directly to databases

- embedded SQL inline

- mixed business logic with presentation

- relied on Apache behaviour

- assumed specific filesystem layouts

- and often only worked on one particular server configuration

It all worked. Until it didn’t.

The history of Perl web development is, in many ways, the history of gradually moving implementation details into more appropriate architectural layers.

The Early CGI Years

Early Perl CGI applications were often a single giant script.

You’d open a file and see:

- request handling

- authentication

- HTML generation

- SQL queries

- business logic

- configuration

- deployment assumptions

…all mixed together in a glorious ball of mud.

Something like this:

#!/usr/bin/perl
use CGI;
use DBI;

my $cgi = CGI->new;
print $cgi->header;
print "<html><body>";

my $dbh = DBI->connect(
    "dbi:mysql:test",
    "user",
    "pass"
);

my $sth = $dbh->prepare(
    "select * from users where id = ?"
);
$sth->execute($cgi->param('id'));

while (my $row = $sth->fetchrow_hashref) {
    print "<h1>$row->{name}</h1>";
}

print "</body></html>";

At the time, this felt perfectly normal.

And to be fair, it was a huge step forward from static HTML sites.

But the design had a fundamental problem:

Everything knew too much about everything else.

The application logic knew:

- how HTTP worked

- how HTML worked

- how Apache launched CGI scripts

- how the database worked

- how the operating system was configured

Every concern leaked into every other concern.

That made systems:

- hard to test

- hard to reuse

- hard to deploy

- hard to scale

- and terrifying to change

The First Big Lesson: Put Logic in Libraries

One of the first signs of a developer maturing is the realisation that application logic should live in reusable modules, not in front-end scripts.

Instead of this:

if ($user->{status} eq 'gold') {
    $discount = 0.2;
}

being embedded directly in a CGI script, it becomes:

my $discount = $user->discount_rate;

That sounds like a small change, but architecturally it’s enormous.

Now the business logic lives in a library.
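As a minimal sketch, the library side of that change might look like this. The User class is invented for the example, and giving non-gold users a rate of 0 is an assumption, since the original snippet doesn't say what other statuses receive.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';

# Hypothetical model class holding the rule in one reusable place.
package User {
    sub new ($class, %args) { return bless {%args}, $class }

    # Gold users get 20%; everyone else gets nothing (assumed).
    sub discount_rate ($self) {
        my $status = $self->{status} // '';
        return $status eq 'gold' ? 0.2 : 0;
    }
}

my $user = User->new(name => 'Alice', status => 'gold');
printf "%s gets %.0f%% off\n", $user->{name}, 100 * $user->discount_rate;
```

Any front end that holds a User object can now ask for the rate without knowing how it is calculated.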

And once that happens, several good things follow automatically.

Multiple Interfaces Become Possible

If the logic is in modules, then:

- a web front-end

- a CLI tool

- a REST API

- a cron job

- a queue worker

…can all use the same underlying code.

The interface layer becomes thin.

The application itself becomes independent of how users interact with it.

That’s a huge increase in flexibility.

Testing Becomes Easier

Testing CGI scripts was always awkward.

Testing modules is straightforward.

You can instantiate objects, call methods, and inspect results without needing a web server or HTTP requests.

The easier code is to test, the more likely it is to be tested.

And tested code tends to survive longer.
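To show how little ceremony that needs, here is a small sketch using Test::More, which ships with Perl. The Pricing package is a made-up stand-in for a real module and is defined inline only so the example is self-contained; the zero rate for non-gold customers is assumed.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';
use Test::More;

# Hypothetical module under test, inlined to keep the example runnable.
package Pricing {
    sub discount_rate ($status) { return $status eq 'gold' ? 0.2 : 0 }
}

# No web server, no HTTP request: just call the function and check it.
is(Pricing::discount_rate('gold'),  0.2, 'gold customers get 20%');
is(Pricing::discount_rate('basic'), 0,   'other customers get no discount');

done_testing;
```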

Deployment Becomes Safer

Once the core behaviour is isolated from the interface layer, replacing the interface becomes far less risky.

You can redesign the UI without rewriting the application.

That separation is one of the foundations of maintainable software.

The PSGI Revolution

The next big architectural leap in Perl web development came with PSGI and Plack.

Younger developers may not fully appreciate how painful web deployment used to be.

In the early 2000s, moving an application between hosting environments could require substantial rewrites.

- A CGI application worked one way.

- A mod_perl application worked another way.

- FastCGI had its own quirks.

- Embedded Apache handlers behaved differently again.

Many Perl developers spent years repeatedly rewriting applications simply because deployment environments changed.

That was madness.

The deployment model is an operational concern.

It should not affect application architecture.

PSGI fixed this by defining a standard interface between web applications and web servers.

The core idea was beautifully simple:

A web application is just a function that receives an environment and returns a response.
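In code, that idea is almost as short as the sentence. The sketch below is a complete PSGI application that needs no modules at all; the response text and the hand-built environment are made up for the example.

```perl
use strict;
use warnings;
use feature 'signatures';
no warnings 'experimental::signatures';

# A PSGI application: a code reference that takes the environment hash
# and returns [ status, [ headers ], [ body chunks ] ].
my $app = sub ($env) {
    my $path = $env->{PATH_INFO} // '/';
    return [
        200,
        [ 'Content-Type' => 'text/plain' ],
        [ "You asked for $path\n" ],
    ];
};

# No server is needed to exercise it: call it with a hand-built environment.
my $res = $app->({ REQUEST_METHOD => 'GET', PATH_INFO => '/hello' });
print "$res->[0] $res->[2][0]";
```

Saved in a file that ends by returning $app, the same code reference could be handed to any PSGI server, for example via plackup if Plack is installed.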

Once that abstraction existed, applications no longer cared whether they were running:

- as CGI

- under mod_perl

- inside FastCGI

- under Starman

- behind nginx

- on a development laptop

- or inside a cloud container

The deployment details moved down a layer.

Exactly where they belonged.

This was one of the most important architectural improvements Perl web development ever made.

And it reflected a broader truth:

Good abstractions stop lower-level implementation details leaking upward.

The Transitional Era: FatPacker and cpanfile

There was also an interesting intermediate stage between traditional Perl deployments and full containerisation.

For years, one of the hardest parts of deploying Perl applications was dependency management.

You’d move an application to a new server and discover:

- the wrong module version

- missing XS libraries

- incompatible Perl versions

- or an entire dependency tree that worked perfectly on the developer’s machine and nowhere else

Large parts of Perl deployment culture evolved around coping with this problem.

Tools like cpanfile improved things by making dependencies explicit and reproducible.

Instead of vaguely documenting requirements in a README, applications could formally declare:

requires 'Dancer2';
requires 'DBIx::Class';
requires 'Template';

That may seem obvious now, but it was a major improvement in deployment reliability.
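A cpanfile can say rather more than "I need this module". As a rough sketch (the version numbers below are illustrative assumptions, not recommendations), it can also set minimum versions and separate test-only dependencies from runtime ones:

```perl
# cpanfile
# Version numbers are placeholders chosen for illustration.
requires 'perl', '5.014';
requires 'Dancer2', '>= 1.0.0';
requires 'DBIx::Class';
requires 'Template';

# Only needed when running the test suite.
on 'test' => sub {
    requires 'Test::More', '0.98';
};
```

Running `cpanm --installdeps .` in the same directory reads this file and installs whatever is missing, which is what turns a list of requirements into a repeatable setup step.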

Then tools like App::FatPacker went even further by packaging dependencies directly alongside applications.

Instead of relying on the target server’s Perl environment, applications could carry much of their runtime context with them.

These tools didn’t completely solve deployment portability:

- system libraries still mattered

- Perl versions still mattered

- operating system differences still mattered

…but they represented an important shift in thinking.

The industry was gradually realising that:

- deployment environments were part of the application

- reproducibility mattered

- and infrastructure assumptions needed to be controlled

Containers eventually pushed this idea to its logical conclusion by packaging not just Perl dependencies, but the entire runtime environment.

In hindsight, tools like cpanfile and FatPacker were stepping stones toward modern container-based deployment models.

Containers Are the Same Idea Again

Docker and containers are simply the same architectural principle repeated one layer lower.

Before containers, deployments were often fragile and highly environment-specific.

Applications depended on:

- particular Linux distributions

- specific Perl versions

- installed system libraries

- hand-configured servers

- undocumented setup steps

Developers became experts in “works on my machine”.

Operations teams became experts in swearing.

Containers changed the model.

Instead of deploying:

- source code

…you deploy:

- a complete runtime environment

Now the application no longer cares whether it runs:

- on bare metal

- on a VPS

- in Kubernetes

- in ECS

- in Cloud Run

- or on someone’s laptop

Again:

- infrastructure concerns move downward

- application concerns stay upward

The boundaries become cleaner.

The Pattern Repeats Everywhere

Once you notice this pattern, you see it throughout software engineering.

Templates

Template systems separate:

- presentation

from

- application logic

HTML should not contain database code.

Business logic should not contain giant blobs of HTML.

ORMs and Database Layers

DBI separates applications from database engines.

ORMs separate applications from raw SQL structure.

Again:

- implementation details move downward

Configuration

Configuration belongs outside code.

Deployment-specific values should not be embedded in applications.
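One common way to do that is to read deployment-specific values from the environment. This is only a sketch: the APP_DB_* variable names and the SQLite default are hypothetical, chosen for the example.

```perl
use strict;
use warnings;

# Build the configuration from a hash of environment variables.
# The variable names are hypothetical; the defaults are intended
# for local development only.
sub load_config {
    my ($env) = @_;

    return {
        dsn     => $env->{APP_DB_DSN}  // 'dbi:SQLite:dbname=dev.db',
        db_user => $env->{APP_DB_USER} // '',
        db_pass => $env->{APP_DB_PASS} // '',
    };
}

my $config = load_config(\%ENV);
print "Connecting to $config->{dsn}\n";
```

Passing the hash in, rather than reading %ENV inside the function, keeps the code easy to test and means the same build can run unchanged on a laptop or in a container.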

APIs

Clients should not care whether data comes from:

- PostgreSQL

- Redis

- another service

- a queue

- flat files

- or magic elves

That’s the implementation’s problem.
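The same idea applies inside a single program. In this sketch, two hypothetical storage classes (UserStore::Memory and UserStore::FlatFile are names invented here) offer the same find_user method, and the calling code neither knows nor cares which one it has been given.

```perl
use strict;
use warnings;

# Two hypothetical back-ends offering the same find_user method.
package UserStore::Memory {
    sub new {
        my ($class, %users) = @_;
        return bless { users => { %users } }, $class;
    }
    sub find_user {
        my ($self, $id) = @_;
        return $self->{users}{$id};
    }
}

package UserStore::FlatFile {
    # Expects one "id<TAB>name" record per line.
    sub new {
        my ($class, $path) = @_;
        return bless { path => $path }, $class;
    }
    sub find_user {
        my ($self, $id) = @_;
        open my $fh, '<', $self->{path}
            or die "Cannot open $self->{path}: $!";
        while (my $line = <$fh>) {
            chomp $line;
            my ($user_id, $name) = split /\t/, $line, 2;
            return { name => $name } if $user_id eq $id;
        }
        return;
    }
}

package main;

# The caller only knows about find_user; it never sees the storage.
sub greeting_for {
    my ($store, $id) = @_;
    my $user = $store->find_user($id) or return 'Hello, stranger';
    return "Hello, $user->{name}";
}

my $store = UserStore::Memory->new(42 => { name => 'Alice' });
print greeting_for($store, 42), "\n";
```

Swapping in `UserStore::FlatFile->new('users.tsv')` changes where the data lives without changing greeting_for at all.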

The Goal Is Not Abstraction for Its Own Sake

Of course, experienced developers also know that abstractions can become ridiculous.

Some abstractions simplify systems.

Others merely hide complexity behind six additional layers of YAML.

Joel Spolsky’s “Law of Leaky Abstractions” remains painfully relevant.

The goal is not abstraction itself.

The goal is to isolate genuinely volatile details.

Good abstractions protect systems from change.

Bad abstractions merely obscure reality.

The Real Skill

The deeper lesson here is that software architecture is largely about deciding:

“What belongs where?”

Experienced developers develop an instinct for:

- which details are likely to change

- which layers should know about which concerns

- and where boundaries should exist

That instinct is often more important than language choice, frameworks, or technology stacks.

And if you spent the early 2000s rewriting CGI applications to run under mod_perl, you probably learned that lesson the hard way.

The post The Long Road from CGI to Containers first appeared on Perl Hacks.

Someone asked me on LinkedIn recently how to cross-post blog content from a GitHub Pages site to places like Medium, dev.to and LinkedIn.

I started writing a quick reply and, as often happens, it turned into something longer. So here’s the slightly more organised version.

Two different problems

There are actually two separate things people mean when they ask this.

1. Sharing links on social media

This is the easy one.

When I publish a post, I’ll usually share it by:

- pasting the link

- adding a short description

- maybe tweaking the wording per platform

The only slightly technical thing worth doing here is making sure your site has Open Graph tags set up properly.

That ensures:

- the right title appears

- the right description is used

- and, importantly, the right image is shown

If you don’t do this, your carefully written post ends up looking like a random bare link.
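If you want to check what a page actually exposes, a few lines of Perl will do. This is a rough sketch: the sample markup and the example.com image URL are made up, and a regex is only good enough for a quick check (a proper HTML parser copes better with varied attribute order and quoting).

```perl
use strict;
use warnings;

# Collect og:* properties from a page's HTML into a hash reference.
# This assumes property comes before content, both double-quoted.
sub open_graph_tags {
    my ($html) = @_;
    my %tags;
    while ($html =~ /<meta\s+property="og:([^"]+)"\s+content="([^"]*)"/gi) {
        $tags{$1} = $2;
    }
    return \%tags;
}

# Hypothetical markup for the head of a blog post.
my $html = <<'HTML';
<head>
  <meta property="og:title" content="How I Cross-Post Blog Articles">
  <meta property="og:description" content="A short summary of the post.">
  <meta property="og:image" content="https://example.com/images/cross-post.png">
</head>
HTML

my $tags = open_graph_tags($html);

# Report the three tags that control how a shared link is displayed.
for my $wanted (qw(title description image)) {
    printf "og:%-12s %s\n", $wanted, $tags->{$wanted} // 'MISSING';
}
```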

What about “Share to…” buttons?

Many blogging platforms (and themes) offer “Share to Facebook/LinkedIn/etc.” buttons.

They:

- generate a pre-filled post

- save a bit of time

I don’t tend to use them – I prefer writing a slightly different intro per platform – but they’re perfectly reasonable if you want something quick and consistent.

2. Reposting the article elsewhere

This is where it gets more interesting.

My usual workflow is:

- write on my own site (WordPress in my case, but GitHub Pages is fine)

- then repost to other platforms

The two I use most are Medium and dev.to – and they take slightly different approaches.

First requirement: you need a web feed

If you want any level of automation, your site needs an RSS/Atom feed.

- WordPress: you get this automatically

- GitHub Pages: depends how your site is built

A lot of people use Jekyll on GitHub Pages (it’s the default and best-supported option), but it’s not the only choice — you can also use other static site generators or even pre-built HTML.

Whichever approach you use, you’ll need to make sure it produces a web feed.

If you’re using Jekyll

Add the official feed plugin to your _config.yml:

plugins:
  - jekyll-feed

GitHub Pages supports this plugin natively, so there’s no extra build step needed.

Your feed will usually be available at:

/feed.xml

If you’re using something else

Most static site generators (Hugo, Eleventy, Astro, etc.) can generate feeds, but:

- it’s often not enabled by default

- the configuration varies

So you’ll need to:

- check the documentation for your tool

- enable or add a feed generator

- confirm the feed URL works

That feed is what tools like dev.to use to discover your posts.
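Confirming that the feed URL works can be scripted too. A minimal sketch, assuming the feed URL is passed on the command line: check_feed.pl is a hypothetical script name, and the root-element test is deliberately crude.

```perl
use strict;
use warnings;
use HTTP::Tiny;

# Rough check: does the document contain an RSS or Atom root element?
sub looks_like_feed {
    my ($body) = @_;
    return $body =~ /<(?:rss|feed)[\s>]/ ? 1 : 0;
}

# Usage: perl check_feed.pl https://example.com/feed.xml
# (HTTP::Tiny needs IO::Socket::SSL installed to fetch https URLs.)
if (my $url = shift @ARGV) {
    my $res = HTTP::Tiny->new->get($url);
    die "Fetch failed: $res->{status} $res->{reason}\n"
        unless $res->{success};

    my $verdict = looks_like_feed($res->{content})
        ? 'Looks like a feed'
        : 'Fetched, but this does not look like RSS or Atom';
    print "$verdict\n";
}
```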

Medium: “Import a story”

Medium has a built-in Import a story feature.

You give it the URL of your original post and it:

- pulls in the content

- recreates the article

- lets you edit it before publishing

It works surprisingly well.

The only slight downside is that it always takes me a few minutes to find the option. It’s on your Stories page (when you’re logged in). Look for the big button in the top right corner.

dev.to: RSS-based automation

dev.to does something a bit cleverer.

You can:

- point it at your RSS/Atom feed

- and it will automatically create draft posts for new articles

A few notes from experience:

- it can take a few hours for drafts to appear

- formatting is usually good but you’ll probably want to tweak it a bit

- it often misses the featured image, so I add that manually

The really important bit: canonical URLs

This is the part that many people miss.

When you repost content, you are technically creating duplicate content – which search engines don’t love.

However…

If you use the official import tools:

- Medium

- dev.to

…they both add a canonical link back to your original post.

That’s the equivalent of saying:

“This content originally lives over here – treat that as the primary version.”

Which means:

- your original site gets the SEO benefit

- you don’t get penalised for duplication

If you’re manually copying and pasting, you need to be careful about this – or at least be aware of the trade-off.
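Under the hood, a canonical link is just a link element in the head of the reposted page. Here is a small sketch for checking that a repost points back where you expect; the example.com URL and the sample markup are invented, and the regex assumes rel comes before href.

```perl
use strict;
use warnings;

# Return the canonical URL declared in a page's HTML, or undef.
# A regex is a shortcut here; attribute order and quoting can vary.
sub canonical_url {
    my ($html) = @_;
    return $html =~ /<link\s+rel="canonical"\s+href="([^"]+)"/i ? $1 : undef;
}

# Hypothetical markup, as it might appear in the head of a repost.
my $repost   = '<head><link rel="canonical" href="https://example.com/blog/my-post/"></head>';
my $original = 'https://example.com/blog/my-post/';

my $found   = canonical_url($repost) // '';
my $verdict = $found eq $original
    ? 'Canonical link points at the original'
    : 'Canonical link is missing or points elsewhere';
print "$verdict\n";
```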

What about LinkedIn?

LinkedIn is a bit different.

As far as I’m aware, there isn’t a clean equivalent of:

- Medium’s import tool

- or dev.to’s RSS integration

It’s worth checking – platforms change – but I haven’t found anything comparable.

So my approach is:

- write a short summary

- link to the original post

That also tends to fit LinkedIn better as a platform.

I’ve seen various guides suggesting ways to automate LinkedIn reposting, but many of them rely on long-dead platforms or brittle integrations. These days, I treat LinkedIn as a place to promote posts rather than republish them.

Is any of this automated?

A bit, but not completely.

- Medium: semi-automated (import tool)

- dev.to: semi-automated (RSS → draft)

- LinkedIn: manual

You could build a fully automated pipeline with APIs and scripts.

But in practice:

- a small amount of manual editing is useful

- each platform benefits from slightly different positioning

So I don’t try to over-automate it.

The takeaway

There’s no standard protocol for cross-posting blog content.

What you actually have is:

- a few helpful platform features

- a bit of light automation

- and some copy-and-paste

And honestly, that works perfectly well.

Like most things on the web, there’s no single ‘right’ way to do this — just a collection of tools that mostly work if you don’t try to be too clever.

If you’d like help building this kind of thing without overcomplicating it, there’s a brief overview of what I do here:

The post How I Cross-Post Blog Articles (Without Making It Complicated) appeared first on Davblog.

I got a piece of spam the other day.

That’s not unusual, of course. What was unusual was that this one was… good.

Or at least, it was convincingly good.

Here’s what I received:

Hi Dave,

Just a few outbound idea I wanted to share for targeting modern Perl programmers :

• Reach out to senior Perl developers at tech firms, referencing clear, practical ebooks for modern Perl programmers that improve code quality.

• Engage junior Perl programmers at startups, citing real‐world techniques and guidance from authors who use Perl daily to accelerate learning.

• Target Perl maintainers at open‐source projects, highlighting the Design Patterns in Modern Perl book with tested examples that streamline code maintenance.

Btw, if you’re interested, one of our outbound experts would be happy to take a peek your current GTM motion and offer thoughts — no expense to you.

(They probably have even better ideas than I do!)

Worth exploring?

Now, you can see what’s going on here.

Someone has clearly pointed an LLM at my profile on LinkedIn and said something like:

Write a short outreach email with ideas tailored to this person.

And, to be fair, it’s done a decent job.

It knows I work with Perl.

It knows I write books.

It even knows enough to mention Design Patterns in Modern Perl.

Ten years ago, this level of targeting would have required actual effort. Now it’s effectively free.

And that’s the problem.

The illusion of understanding

Because while they’ve superficially got a lot right in their email, there are several fundamental points they’ve missed.

- They concentrate on my work with Perl School Publishing, which is a relatively small part of my work

- They don’t seem to realise that I’m a one-person company and have almost never used marketing of any kind

- They don’t realise that most of my work comes from repeat business or word of mouth

- They don’t realise that I’m semi-retired and only work when I want to

- They don’t realise that I’m the kind of person who would never market his services using spam

That last one feels particularly important.

Because, let’s be completely clear here, what they’re proposing is spam.

Let’s not pretend otherwise

There’s a tendency in the industry to dress this up with nicer language.

“Outbound lead generation.”

“GTM strategy.”

“Targeted outreach.”

But if you strip that away, what remains is:

Sending unsolicited commercial messages to people who have not asked to receive them.

In other words, spam.

You can make it more targeted.

You can make it more personalised.

You can even make it sound thoughtful.

But none of that changes the underlying fact that the recipient didn’t ask for it.

Clever, but not smart

And this is where the use of AI becomes interesting.

Because this email is clearly the product of a system that can:

- Read a profile

- Extract key themes

- Generate plausible, relevant-sounding ideas

It has, in some sense, understood me.

Except it hasn’t.

It knows what I talk about.

It has no idea what I want.

It doesn’t know my situation.

It doesn’t know my preferences.

It doesn’t know my values.

And so it produces something that feels eerily close to being relevant — but completely misses the mark.

The cost of cheap personalisation

The real shift here is economic.

Personalisation used to be expensive.

You had to actually research someone.

You had to think about whether contacting them made sense.

Now, you can do it at scale, for effectively zero cost.

Which means we’re about to see a lot more of this:

- Messages that feel tailored

- Messages that reference your work

- Messages that almost make sense

But are still, fundamentally, just unsolicited outreach.

The bar hasn’t just been lowered. It’s been automated.

Use your powers only for good

None of this is a criticism of the technology.

The ability to take a body of text and generate something that feels this relevant is genuinely impressive.

But like any powerful tool, the question isn’t can you do it.

It’s should you.

If your use of AI boils down to:

How can I send more unsolicited messages, more efficiently?

…then all you’ve really done is scale a bad idea.

A final thought

There was a moment, reading that email, where I thought:

This is almost good enough to work.

And that’s the worrying part.

Not that it’s spam.

But that it’s convincing spam.

If this is where we are now, then the real challenge isn’t filtering out the obvious rubbish.

It’s learning to recognise the messages that sound like they understand you — but don’t.

If you’re curious about the kind of work I actually do (none of which involves outbound lead generation), there’s a brief overview here:

Originally published at https://blog.dave.org.uk on April 26, 2026.

But like any powerful tool, the question isn’t can you do it.

It’s should you.

If your use of AI boils down to:

How can I send more unsolicited messages, more efficiently?

…then all you’ve really done is scale a bad idea.

A final thought

There was a moment, reading that email, where I thought:

This is almost good enough to work.

And that’s the worrying part.

Not that it’s spam.

But that it’s convincing spam.

If this is where we are now, then the real challenge isn’t filtering out the obvious rubbish.

It’s learning to recognise the messages that sound like they understand you — but don’t.

If you’re curious about the kind of work I actually do (none of which involves outbound lead generation), there’s a brief overview here:

The post Use Your Powers Only for Good, Clark appeared first on Davblog.

Every month, I write a newsletter which (among other things) discusses some of the technical projects I’ve been working on. It’s a useful exercise — partly as a record for other people, but mostly as a way for me to remember what I’ve actually done.

Because, as I’m sure you’ve noticed, it’s very easy to forget.

So this month, I decided to automate it.

(And, if you’re interested in the end result, this is also a good excuse to mention that the newsletter exists. Two birds, one stone.)

The Problem

All of my Git repositories live somewhere under /home/dave/git. Over time, that’s become… less organised than it might be. Some repos are directly under that directory, others are buried a couple of levels down, and I’m fairly sure there are a few I’ve completely forgotten about.

What I wanted was:

- Given a month and a year

- Find all Git repositories under that directory

- Identify which ones had commits in that month

- Summarise the work done in each repo

The first three are straightforward enough. The last one is where things get interesting.

Finding the Repositories

The first step is walking the directory tree and finding .git directories. This is a classic Perl task — File::Find still does exactly what you need.

    use v5.40;
    use File::Find;

    sub find_repos ($root) {
        my @repos;

        find(
            sub {
                return unless $_ eq '.git';
                push @repos, $File::Find::dir;
            },
            $root
        );

        return @repos;
    }

This gives us a list of repository directories to inspect. It’s simple, robust, and doesn’t require any external dependencies.

(There are, of course, other ways to do this — you could shell out to fd or find, for example — but keeping it in Perl keeps everything nicely self-contained.)
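One possible refinement, shown here as a minimal sketch rather than as the script's actual code: setting `$File::Find::prune` once a `.git` entry has been seen stops File::Find from walking through Git's internal object store, which can save time on a large tree. The name `find_repos_pruned` is hypothetical, and the sketch declares `use v5.36` only so that it also runs on slightly older Perls.

```perl
use v5.36;
use File::Find;

# Hypothetical variant of find_repos: same result, but it does not
# descend into the .git directories it finds.
sub find_repos_pruned ($root) {
    my @repos;

    find(
        sub {
            return unless $_ eq '.git';
            push @repos, $File::Find::dir;

            # Tell File::Find not to walk inside this .git directory.
            $File::Find::prune = 1;
        },
        $root
    );

    my @sorted = sort @repos;
    return @sorted;
}
```

Nested repositories (a repo checked out inside another repo) are still found, because only the `.git` directory itself is pruned, not its siblings.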

Getting Commits for a Month

For each repo, we can run git log with appropriate date filters.

    sub commits_for_month ($repo, $since, $until) {
        # Quote the path so that repos with spaces in their names still work.
        my $cmd = sprintf(
            q{git -C "%s" log --since="%s" --until="%s" --pretty=format:"%%s"},
            $repo, $since, $until
        );

        my @commits = `$cmd`;
        chomp @commits;

        return @commits;
    }

Where $since and $until define the month we’re interested in. I’ve been using something like:

    my $since = "$year-$month-01";
    my $until = "$year-$month-31"; # good enough for this purpose

Yes, that’s a bit hand-wavy around month lengths. No, it doesn’t matter in practice. Sometimes “good enough” really is good enough.
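If the hand-waving ever did start to matter, the real month boundaries are easy to compute with the core Time::Piece module. This is a sketch under a couple of assumptions: `month_range` is a hypothetical helper name, and the explicit times of day are my addition, included so that the range does not depend on how Git fills in a date with no time attached.

```perl
use v5.36;
use Time::Piece;

# Hypothetical helper: return "since" and "until" strings covering
# the whole of the given month, leap years included.
sub month_range ($year, $month) {
    my $first = Time::Piece->strptime(
        sprintf('%04d-%02d-01', $year, $month), '%Y-%m-%d'
    );

    my $since = $first->ymd . ' 00:00:00';
    my $until = sprintf '%04d-%02d-%02d 23:59:59',
        $year, $month, $first->month_last_day;

    return ($since, $until);
}

my ($since, $until) = month_range(2026, 2);
say "$since to $until";    # 2026-02-01 00:00:00 to 2026-02-28 23:59:59
```

The two strings can be passed straight to the `--since` and `--until` options in place of the hard-coded 01 and 31.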

A Small Gotcha

It turns out I have a few repositories where I never got around to making a first commit. In that case, git log helpfully explodes with:

fatal: your current branch 'master' does not have any commits yet

The fix is simply to ignore failures:

my @commits = `$cmd 2>/dev/null`;

If there are no commits, we just get an empty list and move on. No warnings, no noise.

This is one of those little bits of defensive programming that makes the difference between a script you run once and a script you’re happy to run every month.
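The same "ignore the noise" idea can be done without going through the shell at all, which also sidesteps any quoting problems with odd directory names. The following is only a sketch: `run_quiet` and `commits_for_month_quiet` are hypothetical names, and the forking form of `open` assumes a Unix-like system.

```perl
use v5.36;
use File::Spec;

# Hypothetical helper: run a command given as a list (no shell involved),
# discard its STDERR, and return its STDOUT as a list of chomped lines.
sub run_quiet (@cmd) {
    my $pid = open(my $out, '-|') // die "Cannot fork: $!";

    if ($pid == 0) {
        # Child process: silence STDERR, then become the command.
        open STDERR, '>', File::Spec->devnull or exit 127;
        exec { $cmd[0] } @cmd or exit 127;
    }

    # Parent process: read whatever the command printed.
    chomp(my @lines = <$out>);
    close $out;

    return @lines;
}

# How the git call might look with this helper (not called here).
sub commits_for_month_quiet ($repo, $since, $until) {
    return run_quiet(
        'git', '-C', $repo, 'log',
        "--since=$since", "--until=$until", '--pretty=format:%s',
    );
}
```

A repository with no commits still just produces an empty list, exactly as with the `2>/dev/null` version.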

Summarising the Work

Once we have a list of commit messages, we can summarise them.

And this is where I cheated slightly.

I used OpenAPI::Client::OpenAI to feed the commit messages into an LLM and ask it to produce a short summary.

Something along these lines:

    use OpenAPI::Client::OpenAI;

    sub summarise_commits ($commits) {
        my $client = OpenAPI::Client::OpenAI->new(
            api_key => $ENV{OPENAI_API_KEY},
        );

        my $text = join "\n", @$commits;

        my $response = $client->chat->completions->create({
            model    => 'gpt-4.1-mini',
            messages => [{
                role    => 'user',
                content => "Summarise the following commit messages:\n\n$text",
            }],
        });

        return $response->choices->[0]->message->content;
    }

Is this overkill? Almost certainly.

Could I have written some heuristics to group and summarise commit messages? Possibly.

Would it have been as much fun? Definitely not.

And in practice, it works remarkably well. Even messy, inconsistent commit messages tend to turn into something that looks like a coherent summary of work.
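For comparison, here is roughly what the less fun, heuristic route might look like. It is a deliberately crude sketch, not something the script does: it groups commit subjects by their first word and reports the counts. Both function names are hypothetical.

```perl
use v5.36;

# Group commit subjects by their first word, lower-cased,
# so that "Fix typo" and "fix: crash" land in the same bucket.
sub group_commits (@subjects) {
    my %groups;

    for my $subject (@subjects) {
        my ($verb) = $subject =~ /^\s*(\w+)/;
        push @{ $groups{ lc($verb // 'other') } }, $subject;
    }

    return \%groups;
}

# Turn the groups into a one-line summary, biggest group first,
# with ties broken alphabetically.
sub heuristic_summary (@subjects) {
    my $groups = group_commits(@subjects);

    return join ', ',
        map  { sprintf '%s (%d)', $_, scalar @{ $groups->{$_} } }
        sort { @{ $groups->{$b} } <=> @{ $groups->{$a} } || $a cmp $b }
        keys %$groups;
}

say heuristic_summary(
    'Fix typo', 'fix: crash on empty repo', 'Add tests', 'Update README',
);    # fix (2), add (1), update (1)
```

The result is accurate but not very readable, which is a fair illustration of why handing the messages to an LLM is tempting.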

Putting It Together

For each repo:

- Get commits for the month

- Skip if there are none

- Generate a summary

- Print the repo name and summary

The output looks something like:

my-project
-----------
Refactored database layer, added caching, and fixed several edge-case bugs.

another-project
---------------
Initial scaffolding, basic API endpoints, and deployment configuration.

Which is already a pretty good starting point for a newsletter.
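The per-repo steps listed above can be sketched as a single function. To keep the example self-contained, the commit fetching and the summarising are passed in as code references; `monthly_report` and its parameters are hypothetical names, not the script's real interface.

```perl
use v5.36;
use File::Basename qw(basename);

# Hypothetical glue code: build the report text for a list of repos.
#   $repos       - array ref of repository paths
#   $get_commits - code ref: repo path -> list of commit subjects
#   $summarise   - code ref: array ref of subjects -> summary string
sub monthly_report ($repos, $get_commits, $summarise) {
    my @sections;

    for my $repo (@$repos) {
        my @commits = $get_commits->($repo);
        next unless @commits;    # skip repos with no work this month

        my $name = basename($repo);
        push @sections, join "\n",
            $name,
            '-' x length($name),
            scalar $summarise->(\@commits);
    }

    return join "\n\n", @sections;
}
```

In the real script the two code references would wrap the Git and LLM calls; passing them in like this also makes the glue easy to test with canned data.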

A Nice Side Effect

One unexpected benefit of this approach is that it surfaces projects I’d forgotten about.

Because the script walks the entire directory tree, it finds everything — including half-finished experiments, abandoned ideas, and repos I created at 11pm and never touched again.

Sometimes that’s useful. Sometimes it’s mildly embarrassing.

But it’s always interesting.

What Next?

This is very much a first draft.

It works, but it’s currently a script glued together with shell commands and assumptions about my directory structure. The obvious next steps are to:

- Turn it into a proper module

- Add tests

- Clean up the API

- Release it to CPAN

At that point, it becomes something other people might actually want to use — not just a personal tool with hard-coded paths and questionable date handling.

A Future Enhancement

One idea I particularly like is to run this automatically using GitHub Actions.

For example:

- Run monthly

- Generate summaries for that month

- Commit the results to a repository

- Publish them via GitHub Pages

Over time, that would build up a permanent, browsable record of what I’ve been working on.

It’s a nice combination of:

- automation

- documentation

- and a gentle nudge towards accountability

Which is either a fascinating historical archive…